The Enterprise Executive's Definitive Guide to AI Voice Agents in 2026

Everything C-suite leaders need to know about AI voice agents — from business case to implementation roadmap.

A technical and strategic deep-dive into the capabilities, design philosophy, and enterprise infrastructure that make Ringlyn AI the platform of choice for organizations deploying conversational AI at scale.

Utkarsh Mohan

Published: Feb 17, 2026

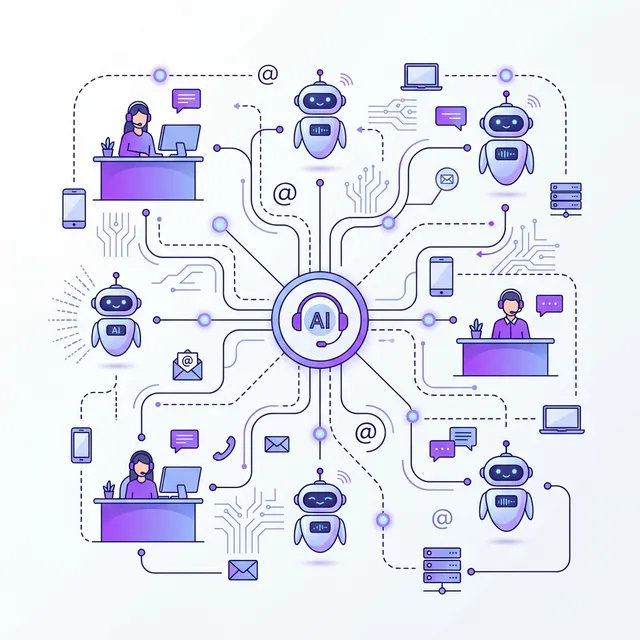

Ringlyn AI was designed with a single governing principle: enterprise organizations deploying conversational AI at scale should not have to choose between capability and reliability. The platform architecture reflects this principle at every layer — from the multi-LLM orchestration engine that powers intelligent conversations to the compliance infrastructure that satisfies the requirements of Fortune 500 legal and security teams.

This document provides an authoritative overview of the Ringlyn AI platform for technology leaders, enterprise architects, and procurement teams conducting technical due diligence.

Many AI voice platforms were built for consumer or developer use cases and subsequently adapted for enterprise requirements. Ringlyn AI was architected in the opposite direction: enterprise scalability, compliance, and integration depth were first-order design requirements, not features added in response to customer feedback.

The practical implication is that enterprise customers do not have to work around limitations inherited from a platform designed for simpler use cases. The platform's native capabilities (multi-LLM routing, elastic concurrent call scaling, granular access controls, comprehensive audit logging, and deep CRM integration) are available to all enterprise customers without custom development or special licensing.

The Ringlyn AI conversation engine is a purpose-built LLM orchestration layer that manages the complete intelligence lifecycle of every call: intent understanding, context maintenance, knowledge retrieval, response generation, and action execution.

Ringlyn AI supports native integration with leading LLM providers — OpenAI, Anthropic, Google, and open-source model deployments — and routes conversational tasks to the most appropriate model based on configurable criteria: task complexity, latency requirements, cost targets, and data residency constraints. Enterprise customers can configure routing policies that optimize for their specific operational requirements.
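A routing policy of this kind can be pictured as a filter followed by a ranking. The sketch below is illustrative only and does not describe Ringlyn AI's actual implementation: the `ModelProfile` and `TaskRequirements` types, the model labels, and every latency and cost figure are hypothetical. It assumes the four criteria named above (task complexity, latency, cost, and data residency) are hard constraints, and that the cheapest eligible model wins.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class ModelProfile:
    name: str                  # hypothetical label, not a real product ID
    max_complexity: int        # highest task complexity (1-5) handled well
    p95_latency_ms: int        # assumed typical response latency
    cost_per_1k_tokens: float  # assumed unit cost
    regions: frozenset         # regions where the model may process data


@dataclass(frozen=True)
class TaskRequirements:
    complexity: int
    max_latency_ms: int
    max_cost_per_1k_tokens: float
    region: str


def route(task: TaskRequirements,
          models: Sequence[ModelProfile]) -> Optional[ModelProfile]:
    """Return the cheapest model that satisfies every constraint, or None."""
    eligible = [
        m for m in models
        if m.max_complexity >= task.complexity
        and m.p95_latency_ms <= task.max_latency_ms
        and m.cost_per_1k_tokens <= task.max_cost_per_1k_tokens
        and task.region in m.regions
    ]
    # Rank by cost first, then by latency as a tie-breaker.
    return min(eligible,
               key=lambda m: (m.cost_per_1k_tokens, m.p95_latency_ms),
               default=None)


# Made-up model catalogue used only to exercise the policy.
MODELS = [
    ModelProfile("small-fast", 2, 300, 0.2, frozenset({"us", "eu"})),
    ModelProfile("large-general", 5, 900, 2.0, frozenset({"us"})),
    ModelProfile("eu-hosted-open", 4, 700, 0.8, frozenset({"eu"})),
]
```

Under these assumptions, a simple EU request resolves to `small-fast`, a complexity-4 EU request resolves to `eu-hosted-open`, and a complexity-5 EU request returns `None`, because the only model capable enough is not available in that region. A production policy would need an explicit rule for that last case, such as escalating to a human agent.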

Enterprise AI voice agents are only as capable as the knowledge they can access. Ringlyn AI's retrieval-augmented generation (RAG) architecture enables agents to query structured and unstructured enterprise knowledge bases in real time during active calls. Answers are grounded in product documentation, policy repositories, customer records, and operational systems, which reduces the hallucination risk that comes from relying on an LLM's built-in knowledge alone.
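The retrieve-then-generate pattern behind RAG can be shown in a few lines. The following is a minimal sketch, not Ringlyn AI's retrieval stack: it substitutes simple word overlap for the embedding-based similarity search a production system would normally use, and the knowledge base entries, function names, and prompt wording are all invented for illustration.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query, passage):
    """Count the tokens shared by query and passage (stand-in for vector similarity)."""
    q, p = Counter(tokenize(query)), Counter(tokenize(passage))
    return sum(min(q[t], p[t]) for t in q)


def retrieve(query, passages, k=2):
    """Return up to k passages with a non-zero score, best match first."""
    ranked = sorted(passages, key=lambda p: score(query, p), reverse=True)
    return [p for p in ranked[:k] if score(query, p) > 0]


def build_prompt(question, passages):
    """Assemble a prompt that restricts the model to the retrieved context."""
    context = "\n".join(f"- {p}" for p in passages) or "- (no matching passage found)"
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


# Made-up sample passages standing in for an enterprise knowledge base.
KNOWLEDGE_BASE = [
    "Refunds are issued within 5 business days of an approved return.",
    "Premium support is available 24 hours a day on all enterprise plans.",
    "Appointments can be rescheduled up to 2 hours before the start time.",
]

question = "How long do refunds take?"
prompt = build_prompt(question, retrieve(question, KNOWLEDGE_BASE, k=1))
```

The resulting `prompt` would then be passed to whichever LLM handles the call. Instructing the model to answer only from the supplied context is the step that limits, though does not eliminate, unsupported answers.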

Enterprise customers require complete control over how their AI agents reason, respond, and represent their brand. Ringlyn AI provides a visual, no-code prompt configuration interface, designed for business users, in which enterprise teams define agent personality, communication style, topic boundaries, escalation triggers, compliance disclosures, and response format requirements.
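Settings like these ultimately reduce to structured data that the agent consults on every turn. The sketch below shows one hypothetical shape for that data and a simple check built on it. The `AgentConfig` fields, the phrase lists, and the `next_step` function are assumptions made for illustration and do not reflect Ringlyn AI's configuration schema.

```python
from dataclasses import dataclass, field


@dataclass
class AgentConfig:
    persona: str                      # e.g. "friendly billing assistant"
    style: str                        # e.g. "concise, formal"
    blocked_topics: list = field(default_factory=list)      # topic boundaries
    escalation_phrases: list = field(default_factory=list)  # escalation triggers
    disclosure: str = ""              # compliance text read at call start


def next_step(utterance: str, config: AgentConfig) -> str:
    """Decide how to handle one caller utterance under the configured rules."""
    text = utterance.lower()
    # Escalation is checked first so a handoff request always takes priority.
    if any(phrase in text for phrase in config.escalation_phrases):
        return "escalate"
    if any(topic in text for topic in config.blocked_topics):
        return "decline"
    return "answer"


# Hypothetical configuration for a billing support agent.
config = AgentConfig(
    persona="friendly billing assistant",
    style="concise, formal",
    blocked_topics=["legal advice", "medical advice"],
    escalation_phrases=["speak to a human", "file a complaint"],
    disclosure="This call may be recorded for quality purposes.",
)
```

Substring matching keeps the example short. A real deployment would more plausibly rely on intent classification, since callers rarely use the exact configured phrases.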

Ringlyn AI's voice layer integrates best-in-class automatic speech recognition with neural text-to-speech synthesis to deliver voice interactions that listeners in independent evaluations could not distinguish from conversations with human representatives.

The value of an AI voice agent is multiplied by the depth of its connection to the enterprise systems of record that contain customer context, operational data, and business logic. Ringlyn AI's integration architecture is designed to connect to any enterprise system through multiple pathways:

| Integration Type | Supported Systems | Capability |
|---|---|---|
| Native CRM Connectors | Salesforce, HubSpot, Microsoft Dynamics, Zoho | Read/write customer records, trigger workflows, log call outcomes in real time |
| Helpdesk Integration | Zendesk, ServiceNow, Freshdesk, Intercom | Create tickets, update case status, retrieve ticket history during active calls |
| Calendar & Scheduling | Google Calendar, Outlook, Calendly, Acuity | Real-time availability lookup, appointment creation and modification, confirmation messaging |
| Telephony Platforms | Twilio, Vonage, Amazon Connect, Genesys | SIP trunking, phone number management, call routing and transfer |
| Data & Analytics | Snowflake, BigQuery, Databricks, Looker | Real-time data retrieval, post-call analytics export, BI dashboard integration |
| Custom Systems | Any REST API or webhook-compatible system | Configurable HTTP actions triggered by conversation events or agent decisions |

Ringlyn AI integration ecosystem as of Q1 2026
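For the Custom Systems row, an HTTP action triggered by a conversation event amounts to a JSON request sent to a customer-defined endpoint. The sketch below builds such a request with Python's standard library. The endpoint URL, the `appointment_booked` event name, and the payload fields are hypothetical placeholders, not part of any documented Ringlyn AI API.

```python
import json
import urllib.request

# Hypothetical endpoint, used only for illustration.
ACTION_URL = "https://example.com/hooks/call-events"


def build_action_request(url, event, payload):
    """Build (but do not send) a JSON POST describing one conversation event."""
    body = json.dumps({"event": event, "payload": payload}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Example: the agent has just booked an appointment during a call.
request = build_action_request(
    ACTION_URL,
    "appointment_booked",
    {"call_id": "call-123", "slot": "2026-03-02T10:00:00Z"},
)
# Sending is left to the caller, for example:
#   urllib.request.urlopen(request, timeout=5)
```

Keeping request construction separate from sending makes the action easy to inspect and test before it is pointed at a live system.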

Enterprise deployments in healthcare, financial services, insurance, and government-adjacent sectors require a compliance posture that most voice AI platforms cannot credibly deliver. Ringlyn AI's compliance architecture was designed to meet the requirements of the most demanding regulated industry deployments:

Every call handled by a Ringlyn AI agent generates a structured dataset that enterprise intelligence teams can use to continuously improve customer experience, optimize agent performance, and identify business opportunities that would be invisible in traditional contact center environments.

Ringlyn AI is available as a fully managed cloud service, with dedicated infrastructure options for enterprises with data sovereignty requirements. Enterprise customers receive:


Most enterprise deployments can be completed in 4–8 weeks from contract signature to production launch, depending on integration complexity. A single-use-case pilot with standard CRM integration typically completes in 2–3 weeks. Multi-system integrations, custom voice persona creation, and complex workflow configurations add time but are supported by Ringlyn AI's dedicated implementation team.

Yes. For enterprises with strict data sovereignty requirements, Ringlyn AI offers dedicated cloud deployment on customer-controlled AWS, Azure, or GCP environments, as well as on-premises deployment in enterprise data center environments. These options require engagement with Ringlyn AI's enterprise solutions team to scope infrastructure requirements and deployment architecture.

Ringlyn AI's multi-region infrastructure fails over automatically when an infrastructure component goes down. The multi-LLM routing layer adds model provider redundancy: if a primary model provider experiences degraded service, traffic is automatically routed to a secondary provider without conversation interruption. Enterprise customers receive proactive incident communication and post-incident root cause analysis for all P1 events.
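Provider redundancy of this kind is commonly built as an ordered fallback chain. The sketch below shows the basic pattern under stated assumptions: the `ProviderUnavailable` exception, the provider functions, and the `generate_with_failover` helper are hypothetical, and the example does not show how a live call is kept uninterrupted while the switch happens.

```python
class ProviderUnavailable(Exception):
    """Raised by a provider call when the service is down or degraded."""


def generate_with_failover(prompt, providers):
    """Try each (name, call) pair in order and return the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderUnavailable as exc:
            # Record the failure and move on to the next provider.
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stand-in provider functions that simulate an outage and a healthy backup.
def primary(prompt):
    raise ProviderUnavailable("degraded service")


def secondary(prompt):
    return f"reply to: {prompt}"


used, reply = generate_with_failover(
    "Where is my order?",
    [("primary", primary), ("secondary", secondary)],
)
```

Here `used` is `"secondary"` because the simulated primary provider raises on every call. A production version would typically add per-provider timeouts and health checks, so a slow provider is skipped before the caller notices a delay.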

The Ringlyn AI platform is designed for business users as well as technical teams. Non-technical users can configure conversation flows, manage agent personas, review analytics, and make operational adjustments through the visual interface. Technical users have access to full API and webhook configuration for advanced integrations. Enterprise onboarding includes structured training for both user groups, with documentation and video resources for ongoing reference.
