The Empathy Architecture: How AI Voice Agents Are Outperforming Human Representatives

New enterprise data reveals a counterintuitive reality: AI voice agents are consistently outscoring human representatives on customer empathy benchmarks.

The definitive operational guide for enterprise customer experience leaders deploying conversational AI at scale — covering technology selection, workforce redesign, measurement frameworks, and the organizational change management required to lead the transformation.

Divyesh Savaliya

Published: Feb 17, 2026

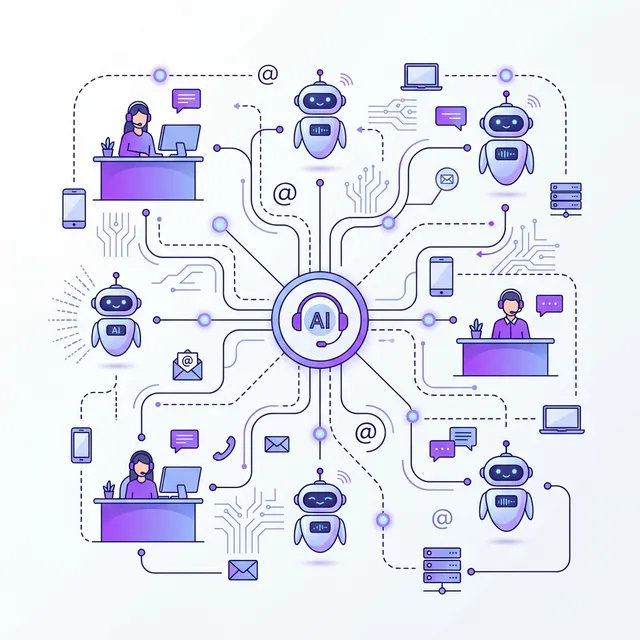

Enterprise customer service is at a structural inflection point. Gartner projects that more than 50% of enterprise contact center volume will be handled by conversational AI by 2027 — a forecast that seemed aggressive when published and now appears conservative given the pace of deployment across sectors. For CX leaders, the question is no longer whether conversational AI will transform their operations. It is whether they will lead that transformation or respond to it.

This blueprint addresses the complete operational challenge: not just the technology, but the organizational design, measurement frameworks, workforce strategy, and change management required to deploy conversational AI in a way that genuinely improves customer experience rather than simply reducing headcount.

Customer expectations have been reshaped by a decade of digital-native brands delivering instant, personalized, always-available service. The enterprise customer of 2026 expects:

Traditional contact center models — built on human agent pools, geographic constraints, scheduled shifts, and point solutions — are structurally incapable of meeting these expectations at enterprise scale. Conversational AI is the only mechanism by which enterprise organizations can genuinely close the gap between customer expectations and operational reality.

Data from enterprise deployments, rather than vendor marketing, shows conversational AI delivering measurable improvements across seven CX dimensions:

| CX Dimension | Traditional Model Performance | Conversational AI Performance | Source |
|---|---|---|---|
| Average speed to answer (ASA) | 3–7 minutes | < 3 seconds | Industry benchmark composite |
| After-hours availability | Limited or none | 100% (24/7/365) | Platform capability |
| First-contact resolution rate | 64% | 71% | Ringlyn AI deployment data |
| Customer satisfaction (CSAT) | Baseline | +12–18pp improvement | Enterprise deployment composite |
| Cost per resolved interaction | $6–$12 (human) | $0.10–$0.25 (AI) | TCO analysis, 2025 |
| Call abandonment rate | 18–25% | < 2% | Enterprise deployment composite |
| Data capture accuracy (post-call) | 60–70% (manual entry) | 99%+ (automated) | CRM integration audit data |

Performance data from enterprise conversational AI deployments. Results vary by implementation quality and use case.
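The cost row is easier to interpret as a blended figure, because in a hybrid model escalated calls usually pass through AI intake before reaching a human. The sketch below assumes every interaction incurs the AI cost and that the share the AI does not contain additionally incurs the full human cost. The function name is hypothetical, and the 60% containment rate in the usage lines is an illustrative input, not a figure from the table.

```python
def blended_cost_per_interaction(containment_rate: float, ai_cost: float, human_cost: float) -> float:
    """Expected cost per interaction in an AI-first hybrid model.

    Assumes every interaction incurs the AI cost, and the share the AI does not
    contain (1 - containment_rate) additionally incurs the full human cost.
    """
    if not 0.0 <= containment_rate <= 1.0:
        raise ValueError("containment_rate must be between 0 and 1")
    escalated_share = 1.0 - containment_rate
    return ai_cost + escalated_share * human_cost


# Upper ends of the table's cost ranges; 60% containment is a hypothetical input.
all_human = 12.00
hybrid = blended_cost_per_interaction(containment_rate=0.60, ai_cost=0.25, human_cost=12.00)
print(f"all-human: ${all_human:.2f}, hybrid: ${hybrid:.2f}")  # prints: all-human: $12.00, hybrid: $5.05
```

Even under these conservative assumptions, the blended figure is dominated by the escalated share, which is why containment rate matters more than the per-minute price of the AI platform.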

The most effective enterprise conversational AI deployments are not pure AI replacements of human agents — they are carefully designed hybrid models that allocate each interaction type to the handler best positioned to resolve it efficiently and satisfyingly.

Tier 1: High-volume, well-defined interactions where the resolution path is clear and the customer's primary value driver is speed and availability. Examples: appointment scheduling, account inquiries, order status, FAQ responses, payment processing, outbound reminders and confirmations, lead qualification. These interactions should be AI-first, with human escalation available but rarely required.

Tier 2: Moderate-complexity interactions where AI handles the initial intake, context gathering, and preliminary qualification before transferring to a human agent with full context. The human agent receives a structured handoff (caller identity, account status, stated issue, and sentiment) and can begin resolution without any information gathering. AI-assisted handoffs reduce average handle time for human agents by 30–40%.
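The structured handoff is essentially a small data contract between the AI agent and the human agent's desktop. The sketch below models the four fields the text lists plus a catch-all for other intake details. The class name, field names, and JSON serialization are hypothetical choices for illustration, not any specific platform's schema.

```python
import json
from dataclasses import asdict, dataclass, field
from enum import Enum


class Sentiment(Enum):
    NEGATIVE = "negative"
    NEUTRAL = "neutral"
    POSITIVE = "positive"


@dataclass(frozen=True)
class HandoffContext:
    """Context passed from the AI agent to a human agent at transfer (hypothetical schema)."""

    caller_id: str        # verified caller identity, e.g. a CRM contact ID
    account_status: str   # e.g. "active" or "past_due"
    stated_issue: str     # the customer's issue, summarized by the AI during intake
    sentiment: Sentiment  # detected sentiment at the moment of transfer
    extra: dict = field(default_factory=dict)  # any other details gathered during intake

    def to_json(self) -> str:
        """Serialize for the agent desktop, storing the enum as its string value."""
        data = asdict(self)
        data["sentiment"] = self.sentiment.value
        return json.dumps(data, sort_keys=True)


handoff = HandoffContext(
    caller_id="C-1001",
    account_status="active",
    stated_issue="Charged twice for the March invoice",
    sentiment=Sentiment.NEGATIVE,
    extra={"invoice_id": "INV-2031"},
)
print(handoff.to_json())
```

Making the record immutable means the human agent sees exactly what the AI captured at transfer time; any follow-up notes belong in the CRM, not in the handoff object.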

Tier 3: High-complexity, high-stakes, or relationship-critical interactions that require human judgment, empathy, and accountability. Examples: complaint escalations, large commercial transactions, legally sensitive situations, and interactions with identified high-value customers who have specific relationship requirements. These interactions should be routed directly to skilled human agents, ideally the same representative who has a history with the customer.
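The three-tier allocation can be sketched as a routing rule that runs once an intent has been recognized. The intent labels, the `has_relationship_requirements` flag, and the function names below are hypothetical; a real deployment would use its own intent taxonomy and customer data.

```python
from enum import Enum


class Tier(Enum):
    AI_FIRST = 1     # Tier 1: AI resolves; human escalation available
    AI_ASSISTED = 2  # Tier 2: AI intake, then structured handoff to a human
    HUMAN_LED = 3    # Tier 3: route directly to a skilled human agent


# Hypothetical intent labels; a real deployment would use its own intent taxonomy.
TIER_1_INTENTS = {"appointment_scheduling", "account_inquiry", "order_status", "faq", "payment"}
TIER_3_INTENTS = {"complaint_escalation", "large_commercial_transaction", "legal_matter"}


def route(intent: str, has_relationship_requirements: bool = False) -> Tier:
    """Map a recognized intent (plus one customer flag) to a handling tier."""
    # Tier 3 checks come first so high-stakes calls never land in an automated flow.
    if intent in TIER_3_INTENTS or has_relationship_requirements:
        return Tier.HUMAN_LED
    if intent in TIER_1_INTENTS:
        return Tier.AI_FIRST
    # Anything not explicitly classified gets AI intake plus a human resolver.
    return Tier.AI_ASSISTED


print(route("order_status"))
print(route("billing_dispute"))
print(route("order_status", has_relationship_requirements=True))
```

Defaulting unclassified intents to Tier 2 is a deliberately conservative choice: the customer still gets instant intake, but a human owns the resolution until the use case has been validated for full automation.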

Not all automation opportunities are equally valuable. CX leaders should prioritize conversational AI use cases using a two-dimensional framework: volume × resolution complexity. High-volume, low-complexity use cases deliver the fastest ROI and should be automated first. Low-volume, high-complexity use cases should typically remain human-handled, at least until conversational AI capability matures further.
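The volume × complexity framework can be sketched as a simple quadrant sort over a backlog of candidate use cases. The 0 to 1 complexity scale, the cutoffs, the quadrant labels, and all backlog numbers below are hypothetical; the point is the ordering logic, not the values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    monthly_volume: int
    complexity: float  # hypothetical 0-1 scale: 0 = single scripted path, 1 = open-ended judgment


# Quadrant labels, in the order they should be worked through.
QUADRANT_ORDER = ["automate first", "evaluate case by case", "keep human-handled"]


def quadrant(uc: UseCase, volume_cutoff: int, complexity_cutoff: float) -> str:
    high_volume = uc.monthly_volume >= volume_cutoff
    low_complexity = uc.complexity < complexity_cutoff
    if high_volume and low_complexity:
        return "automate first"
    if not high_volume and not low_complexity:
        return "keep human-handled"
    return "evaluate case by case"


def prioritize(use_cases, volume_cutoff, complexity_cutoff):
    """Return (name, quadrant) pairs: best quadrant first, then highest volume, then lowest complexity."""
    def sort_key(uc):
        q = quadrant(uc, volume_cutoff, complexity_cutoff)
        return (QUADRANT_ORDER.index(q), -uc.monthly_volume, uc.complexity)

    ranked = sorted(use_cases, key=sort_key)
    return [(uc.name, quadrant(uc, volume_cutoff, complexity_cutoff)) for uc in ranked]


# Hypothetical volumes and complexity scores for illustration only.
backlog = [
    UseCase("complaint_escalation", 600, 0.9),
    UseCase("order_status", 42_000, 0.1),
    UseCase("billing_dispute", 9_000, 0.6),
    UseCase("appointment_scheduling", 15_000, 0.2),
]
for name, label in prioritize(backlog, volume_cutoff=5_000, complexity_cutoff=0.5):
    print(f"{name}: {label}")
```

The two off-diagonal quadrants are grouped as "evaluate case by case" because a high-volume, moderately complex flow may still justify Tier 2 treatment even if it is not ready for full automation.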

Measuring the impact of conversational AI requires a measurement framework that captures both operational efficiency and customer experience quality — because optimizing for cost reduction alone will predictably degrade customer satisfaction, creating second-order business costs that outweigh first-order savings.

| Metric Category | Key Metrics | Measurement Method | Target Direction |
|---|---|---|---|
| Customer Experience | CSAT, NPS, CES, call abandonment | Post-call surveys, interaction analysis | ↑ Improve |
| Resolution Quality | First-contact resolution, re-contact rate, escalation rate | CRM tracking, call analysis | ↑ FCR, ↓ re-contact |
| Operational Efficiency | Cost per interaction, handle time, calls per hour | Cost accounting, telephony data | ↓ Cost, ↑ volume |
| AI Performance | Intent recognition accuracy, completion rate, latency | Platform analytics | ↑ All |
| Workforce Impact | Human agent utilization, interactions per agent, quality scores | WFM data, QA platform | ↑ Complexity handled |
| Business Outcomes | Revenue per call (sales), recovery rate (collections), conversion | CRM, revenue tracking | ↑ All |

Enterprise conversational AI measurement framework. Establish baseline for all metrics before deployment.
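The warning that cost optimization alone degrades satisfaction can be turned into a mechanical check against the pre-deployment baseline. In the sketch below the category names follow the table, while the `Metric` class, both helper functions, and every number are hypothetical illustrations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str
    category: str           # one of the table's metric categories
    higher_is_better: bool  # target direction from the table
    baseline: float         # measured before deployment
    current: float          # measured after deployment

    def improved(self) -> bool:
        if self.higher_is_better:
            return self.current > self.baseline
        return self.current < self.baseline


def regressions(metrics):
    """Names of metrics that moved the wrong way (or did not move) against baseline."""
    return [m.name for m in metrics if not m.improved()]


def cost_gain_masking_cx_loss(metrics) -> bool:
    """True when efficiency improved while any customer-experience metric regressed."""
    efficiency_up = any(m.improved() for m in metrics if m.category == "Operational Efficiency")
    cx_down = any(not m.improved() for m in metrics if m.category == "Customer Experience")
    return efficiency_up and cx_down


# Hypothetical before/after values for illustration only.
scorecard = [
    Metric("CSAT", "Customer Experience", True, baseline=78.0, current=74.0),
    Metric("Call abandonment rate", "Customer Experience", False, baseline=0.21, current=0.04),
    Metric("Cost per interaction", "Operational Efficiency", False, baseline=9.0, current=3.1),
    Metric("Re-contact rate", "Resolution Quality", False, baseline=0.22, current=0.18),
]
print(regressions(scorecard))
print(cost_gain_masking_cx_loss(scorecard))
```

In this made-up scorecard the cost and abandonment numbers look excellent, yet CSAT has slipped, which is exactly the second-order cost the framework is designed to surface.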

Technical implementation failures account for a minority of enterprise conversational AI project failures. The majority fail at the organizational level: inadequate change management, workforce resistance, insufficient executive sponsorship, or poor alignment between CX objectives and broader organizational priorities.

Effective enterprise change management for conversational AI deployments requires addressing three distinct stakeholder groups:

Synthesizing patterns from successful enterprise conversational AI deployments, the following playbook provides CX leaders with a structured implementation path:


The key is sequencing: deploy AI on your highest-volume, best-defined use cases first, where resolution paths are clear and customer expectations are straightforward. Maintain human backup for all AI interactions initially, and use transcript-based QA to identify and resolve failure modes before expanding to more complex use cases. Never deploy AI on sensitive or high-stakes interactions until performance has been validated at lower stakes.
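The sequencing advice amounts to a gate: expand to the next use case only after transcript-based QA shows the current one is performing. The sketch below assumes three signals (completion rate, QA failure rate, and how many transcripts were reviewed); the thresholds, rollout order, and names are hypothetical and should be calibrated against your own baseline.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageResults:
    completion_rate: float     # share of AI conversations completed without escalation
    qa_failure_rate: float     # share of reviewed transcripts that QA flagged as failures
    reviewed_transcripts: int  # number of transcripts QA actually reviewed


# Hypothetical gate values; calibrate against your own baseline.
MIN_COMPLETION_RATE = 0.80
MAX_QA_FAILURE_RATE = 0.02
MIN_REVIEWED = 200

# Hypothetical rollout order: best-defined, lowest-stakes use cases first.
ROLLOUT_ORDER = ["order_status", "appointment_scheduling", "billing_inquiry", "account_changes"]


def passes_gate(results: StageResults) -> bool:
    if results.reviewed_transcripts < MIN_REVIEWED:
        return False  # not enough transcript evidence to judge the stage yet
    return (results.completion_rate >= MIN_COMPLETION_RATE
            and results.qa_failure_rate <= MAX_QA_FAILURE_RATE)


def next_stage(current: int, results: StageResults) -> int:
    """Index of the last use case that should be live; advances at most one step at a time."""
    if passes_gate(results) and current + 1 < len(ROLLOUT_ORDER):
        return current + 1
    return current


print(next_stage(0, StageResults(completion_rate=0.86, qa_failure_rate=0.01, reviewed_transcripts=350)))
print(next_stage(1, StageResults(completion_rate=0.86, qa_failure_rate=0.05, reviewed_transcripts=350)))
```

Requiring a minimum number of reviewed transcripts keeps a strong first week of results from unlocking the next stage before the failure modes have had a chance to appear.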

The optimal restructuring model concentrates human agent capacity on Tier-2 and Tier-3 interactions: complex problem-solving, escalations, relationship-critical conversations, and high-value customer management. This typically means a smaller but higher-skilled agent workforce, with higher compensation and lower turnover — a significant quality improvement over traditional high-volume, high-churn agent models. Invest in reskilling existing agents before reductions.

Well-designed deployments consistently show CSAT improvements of 10–18 percentage points for AI-handled interactions, driven primarily by faster speed to answer, the elimination of hold times, and 24/7 availability. The caveat is implementation quality, which is the primary determinant of customer satisfaction outcomes: poorly designed conversation flows or insufficient escalation pathways will lower CSAT rather than raise it.

Transparency and performance are the dual answers. Disclosing that a customer is interacting with an AI agent, which emerging regulatory standards require in many jurisdictions, establishes trust. Beyond disclosure, the most effective response to AI skepticism is performance: when an AI agent resolves a customer's issue faster and more accurately than they expected, objections dissolve. Make it simple for customers who remain uncomfortable to request a human, and monitor escalation rates as a leading indicator of conversation quality.
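Treating escalation rate as a leading indicator means watching it continuously rather than in a monthly report. The sketch below keeps a rolling window of recent AI conversations and flags when the share that ended in a request for a human crosses a threshold. The class name, the default window of 500 conversations, and the 15% threshold are hypothetical.

```python
from collections import deque


class EscalationMonitor:
    """Rolling share of AI conversations in which the caller asked for a human."""

    def __init__(self, window: int = 500, alert_threshold: float = 0.15):
        if window <= 0:
            raise ValueError("window must be positive")
        self._outcomes: deque = deque(maxlen=window)  # oldest outcomes drop off automatically
        self.alert_threshold = alert_threshold

    def record(self, escalated: bool) -> None:
        self._outcomes.append(bool(escalated))

    @property
    def rate(self) -> float:
        if not self._outcomes:
            return 0.0
        return sum(self._outcomes) / len(self._outcomes)

    def should_alert(self) -> bool:
        # Only alert on a full window, so a handful of early calls cannot trigger it.
        full = len(self._outcomes) == self._outcomes.maxlen
        return full and self.rate > self.alert_threshold


monitor = EscalationMonitor(window=10, alert_threshold=0.2)
for escalated in [False] * 8 + [True] * 2:
    monitor.record(escalated)
print(monitor.rate, monitor.should_alert())  # prints: 0.2 False
monitor.record(True)  # the oldest non-escalated call drops out of the window
print(monitor.rate, monitor.should_alert())  # prints: 0.3 True
```

A bounded `deque` keeps memory and computation constant regardless of call volume; segmenting one monitor per use case would show which conversation flow is driving a rise.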
